Also see

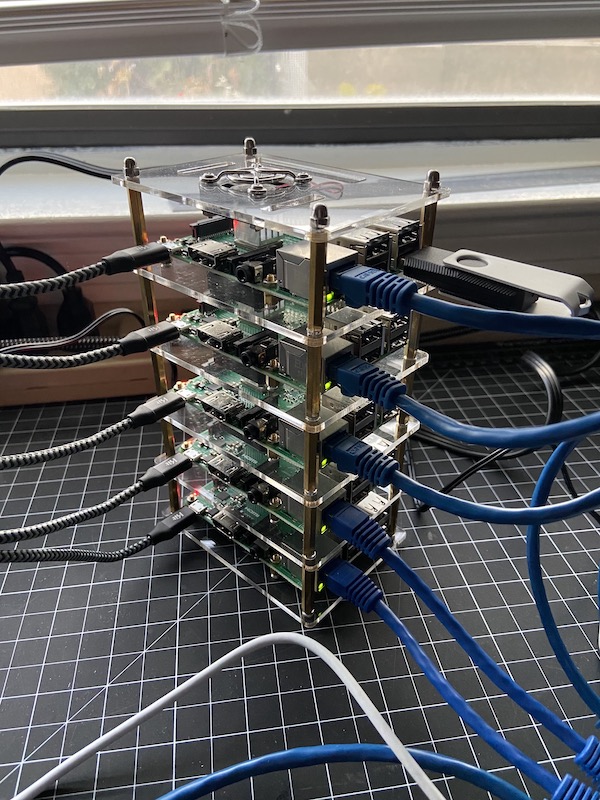

- Hi-Five-Pi: Raspberry Pi Cluster Part 1 – Initial Hardware/Software Setup

- Hi-Five-Pi: Raspberry Pi Cluster Part 2 – Shared Users and Maintenance Software

In my first two tutorials, I set up a five-node Raspberry Pi cluster, installed Slurm for scheduling jobs, and configured users, storage, and administrative tools. In this post, I’m going to walk through running small sample programs on the cluster and point to the getting-started guides I referenced when learning parallel programming.

There are two ways to run jobs on a Slurm-managed cluster. The sbatch command submits jobs to the queue, where they run according to priority once resources are available. The srun command runs jobs and programs interactively and can be combined with the salloc command to reserve an allocation of resources (e.g. nodes, memory). Let’s try both.

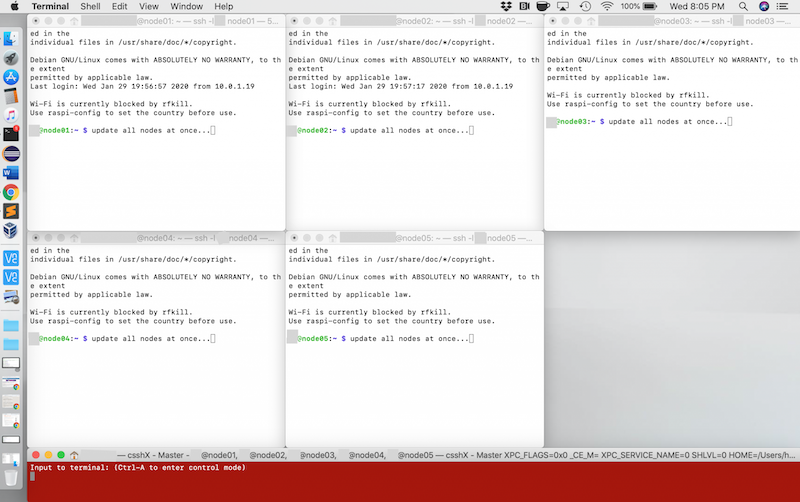

Check Node Status

If this part fails, try rebooting all nodes and reviewing the notes from the first two tutorials.

- SSH to master node

- Run sinfo to see your partition information.

- Run a job on four nodes to make them print their hostname:

- srun --nodes=4 hostname
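Before asking for four nodes, it can help to confirm that four are actually idle. The sketch below is one possible way to do that, not part of the original setup: `count_idle_nodes` is a hypothetical helper name, and it assumes `sinfo` is called with `-h` (no header) and `-o "%D %T"` (node count, then state) so each line looks like `4 idle`. If a node belongs to more than one partition it may be counted more than once.

```shell
#!/bin/bash
# Hypothetical helper: sum the node counts on every line whose state is "idle".
# Expects lines of the form "<count> <state>", as printed by:
#   sinfo -h -o "%D %T"
count_idle_nodes() {
    awk '$2 == "idle" { total += $1 } END { print total + 0 }'
}

# Only query Slurm when sinfo is actually installed on this machine.
if command -v sinfo >/dev/null 2>&1; then
    idle=$(sinfo -h -o "%D %T" | count_idle_nodes)
    echo "Idle nodes: ${idle}"
fi
```

If the reported number is lower than the value passed to `--nodes`, the `srun` command will wait for nodes to free up rather than start right away.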

Download Sample Programs and Compile Code

- cd /scratch

- git clone https://github.com/mpitutorial/mpitutorial.git

- cd mpitutorial/tutorials/mpi-hello-world/code

- make

Run Hello World Program Interactively

- salloc -N 4 #this requests a 4 node allocation

- mpiexec -n 4 mpi_hello_world

- exit #exits node allocation

Create Slurm Submission Scripts

- nano test1.sh

- Paste the following into test1.sh, one directive per line:

      #!/bin/bash
      #SBATCH --job-name=test_mpi1
      #SBATCH --time=1:00
      #SBATCH -N 4
      #SBATCH --ntasks-per-node=4
      mpiexec -n 16 mpi_hello_world

- chmod 700 test1.sh

- nano test2.sh

- Paste the following into test2.sh, one directive per line:

      #!/bin/bash
      #SBATCH --job-name=test_mpi1
      #SBATCH --time=1:00
      #SBATCH -N 2
      #SBATCH --ntasks-per-node=4
      mpiexec -n 8 mpi_hello_world

- chmod 700 test2.sh
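In both scripts, the process count given to mpiexec is the node count multiplied by the tasks per node (4 × 4 = 16 and 2 × 4 = 8). As a rough sketch of that relationship, the hypothetical function below, `make_job_script`, prints a script in the same shape with the total computed for you. The function name and the `demo_job` job name are illustrative only and do not come from the tutorial.

```shell
#!/bin/bash
# Hypothetical generator: print a Slurm submission script to stdout.
# Arguments: job name, node count, tasks per node.
make_job_script() {
    local name=$1 nodes=$2 tasks_per_node=$3
    # Total MPI processes = nodes x tasks per node.
    local total=$(( nodes * tasks_per_node ))
    cat <<EOF
#!/bin/bash
#SBATCH --job-name=${name}
#SBATCH --time=1:00
#SBATCH -N ${nodes}
#SBATCH --ntasks-per-node=${tasks_per_node}
mpiexec -n ${total} mpi_hello_world
EOF
}

# Example: a 2-node, 4-tasks-per-node script (8 processes in total).
make_job_script demo_job 2 4
```

Redirecting the output to a file, for example `make_job_script demo_job 2 4 > demo.sh`, gives a script that can be submitted the same way as test1.sh and test2.sh.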

Submit Batch Jobs

- sbatch test1.sh

- sbatch test2.sh

- sbatch test2.sh

- sbatch test2.sh

- sbatch test1.sh

- squeue #check the queue, although jobs will likely finish too quickly to appear in it
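Each sbatch call prints a confirmation line such as `Submitted batch job 35`, and by default Slurm writes that job's output to `slurm-<jobid>.out` in the directory the job was submitted from. The sketch below shows one way to turn the confirmation line into the expected file name. `job_output_file` is a hypothetical helper, and it assumes the default output naming has not been overridden with `--output`.

```shell
#!/bin/bash
# Hypothetical helper: map sbatch's confirmation line
# ("Submitted batch job 35") to the default output file name.
job_output_file() {
    local job_id=${1##* }   # keep only the text after the last space
    echo "slurm-${job_id}.out"
}

# Only submit when sbatch is actually installed on this machine.
if command -v sbatch >/dev/null 2>&1; then
    confirmation=$(sbatch test1.sh)
    echo "Output will be written to $(job_output_file "$confirmation")"
fi
```

This is handy when the jobs finish before squeue has a chance to show them, since the job number is the only link between a submission and its output file.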

View Output

- ls

- slurm-35.out slurm-36.out slurm-37.out slurm-38.out slurm-39.out slurm-40.out

- cat slurm-XX.out
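To check that the ranks were spread across the nodes as requested, the output files can be summarized per host. This is a minimal sketch that assumes each line of output has the form `Hello world from processor <host>, rank <r> out of <n> processors`, which is what the mpitutorial hello-world program prints as far as I know. `summarize_ranks` is a hypothetical helper name.

```shell
#!/bin/bash
# Hypothetical helper: count how many "Hello world" lines each host printed.
# Reads program output on stdin and prints "<host> <count>", sorted by host.
summarize_ranks() {
    awk '/^Hello world from processor/ {
             host = $5
             sub(/,$/, "", host)   # drop the trailing comma after the host name
             count[host]++
         }
         END { for (h in count) print h, count[h] }' | sort
}

# Summarize every Slurm output file in the current directory, if any exist.
if ls slurm-*.out >/dev/null 2>&1; then
    cat slurm-*.out | summarize_ranks
fi
```

For a single run of test1.sh, each of the four hosts would be expected to appear with a count of 4 if the tasks were distributed evenly.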